Alumni

Bio

Daniel Csillag currently studies mathematics and computer graphics at Instituto Nacional de Matemática Pura e Aplicada (IMPA), Brazil. Some of his current projects include automatic facial expression synthesis and an efficient 3D renderer. In his free time, he enjoys practicing Ninjutsu, Judo, Brazilian Jiu-Jitsu, Capoeira and Taekwondo. He enjoys classical music and plays piano, flute and classical guitar.

Computational Essay: Implicit Curves in Computer Graphics

Project: OCR for Sheet Music

Goal

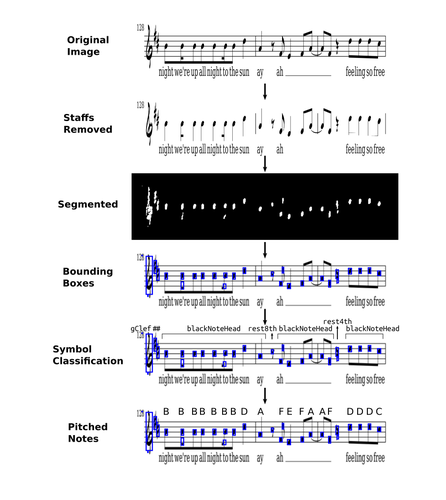

Given an image of a page of printed sheet music, interpret it to produce an internal representation that can then be played or passed to musical notation software.

Summary of Results

We first find all the staff lines in the input image using BottomHatTransform. We then split the image into subimages, one per staff (a group of five staff lines), and remove the lines using classical morphological image operations. Next, a SegNet trained on the DeepScores segmentation dataset highlights the musical symbols, whose bounding boxes are found using ComponentMeasurements on the segmented image after Binarize and Blur are applied. Each bounding box is then passed to a classifier trained on the DeepScores classification dataset, and the notes are assigned pitches based on the clef and their distance from the bottom of the staff.
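The final pitching step can be illustrated with a short sketch. This is not the project's code (which uses the Wolfram Language); it is a minimal Python example under stated assumptions: the five staff line positions and the notehead center are given as vertical pixel coordinates (increasing downward), each half staff space corresponds to one diatonic step, and accidentals and key signatures are ignored. The names `pitch_from_position`, `BOTTOM_LINE`, and `LETTERS` are hypothetical and chosen only for illustration.

```python
# Hypothetical helper names; a sketch of pitching a note from its
# vertical distance to the bottom staff line and the clef.

LETTERS = ["C", "D", "E", "F", "G", "A", "B"]

# Diatonic index (octave * 7 + letter index) of the bottom staff line
# for each clef: treble = E4, bass = G2, alto = F3.
BOTTOM_LINE = {
    "treble": 4 * 7 + 2,
    "bass": 2 * 7 + 4,
    "alto": 3 * 7 + 3,
}


def pitch_from_position(note_y, staff_line_ys, clef="treble"):
    """Return a note name such as 'E4' for a notehead centered at note_y.

    staff_line_ys holds the y-coordinates of the five staff lines in
    image coordinates (y grows downward). Accidentals are not handled.
    """
    lines = sorted(staff_line_ys)
    if len(lines) != 5:
        raise ValueError("expected exactly five staff lines")
    top, bottom = lines[0], lines[-1]
    # Four spaces between five lines, so eight half spaces in total.
    half_space = (bottom - top) / 8.0
    # Number of diatonic steps above (positive) or below (negative)
    # the bottom line.
    steps = round((bottom - note_y) / half_space)
    octave, letter = divmod(BOTTOM_LINE[clef] + steps, 7)
    return f"{LETTERS[letter]}{octave}"


staff = [100, 110, 120, 130, 140]
print(pitch_from_position(140, staff, "treble"))  # bottom line
print(pitch_from_position(150, staff, "treble"))  # first ledger line below
print(pitch_from_position(100, staff, "bass"))    # top line
```

Rounding to the nearest half space makes the mapping tolerant of small errors in the detected notehead center, though noteheads lying almost exactly between two positions remain ambiguous.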

Future Work

- Rhythm classification: train a classifier that recognizes the rhythm by extending the bounding box for notes

- Handwritten OMR: build a system that works on handwritten sheet music

- More symbols: slurs, fingering, crescendos, diminuendos, etc.

24th Annual Wolfram Summer School

Bentley University, Waltham, MA, USA

June–July, 2026